Google Alerts Gmail Users to Emerging AI Scam Leveraging Gemini Vulnerabilities

Unpacking the sophisticated indirect prompt injection attacks targeting Google’s AI assistant, their mechanics, user impacts, and essential protective measures


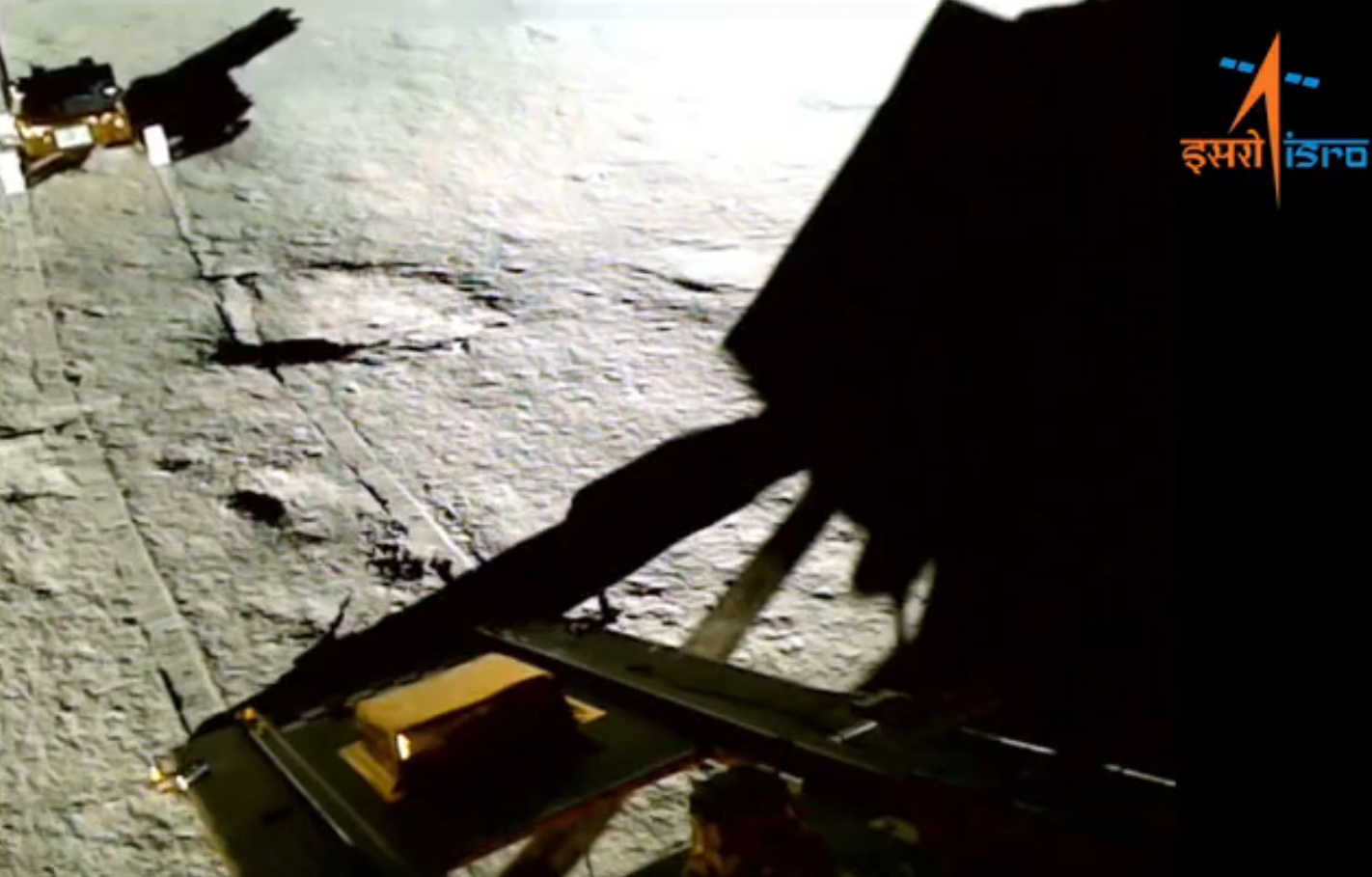

In recent months, Google has highlighted a growing threat involving indirect prompt injections, a tactic where cybercriminals embed hidden instructions in emails or other data to manipulate AI systems like Gemini. This vulnerability, detailed in Google’s security updates from June 2025, allows attackers to trick the AI into displaying fraudulent alerts or extracting sensitive information without user interaction. Reports from July 2025 revealed specific exploits in Gemini for Workspace, where invisible prompts in emails or calendar invites could hijack summaries for phishing purposes. With over 1.8 billion Gmail accounts at risk, experts warn of this “AI against AI” scam that bypasses traditional safeguards. This article explores the scam’s operation, real-world examples, expert insights, user reactions, and strategies to safeguard accounts.

Understanding Indirect Prompt Injections

Indirect prompt injections differ from direct attacks by hiding malicious commands in external sources like emails, documents, or invites. These instructions, often rendered invisible through zero-font size or white text, direct AI tools like Gemini to perform unauthorized actions, such as revealing passwords or generating fake security warnings. Google’s security blog in June 2025 described this as an emerging threat with the rise of generative AI, emphasizing layered defenses to counter it.
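
The hiding tricks described above can be illustrated with a small detector. This is a minimal sketch, not Google's detection method: it assumes the email body is available as an HTML string and that the text is hidden with inline `style` attributes. The names `HIDDEN_STYLE`, `HiddenTextFinder`, and `find_hidden_text` are hypothetical, and the patterns are deliberately narrow (zero font size, white text, `display:none`, `visibility:hidden`).

```python
import re
from html.parser import HTMLParser

# Heuristic inline-style patterns that commonly hide text (assumed, not exhaustive).
HIDDEN_STYLE = re.compile(
    r"font-size\s*:\s*0(?:\.0+)?(?:px|pt|em|rem|%)?\s*(?:;|$)"
    r"|(?<![-\w])color\s*:\s*(?:#fff(?:fff)?|white)\s*(?:;|$)"
    r"|display\s*:\s*none"
    r"|visibility\s*:\s*hidden",
    re.IGNORECASE,
)

# Elements that never have a closing tag, so they are not tracked on the stack.
VOID_TAGS = {"area", "base", "br", "col", "embed", "hr", "img",
             "input", "link", "meta", "source", "track", "wbr"}


class HiddenTextFinder(HTMLParser):
    """Collects text that sits inside an element styled to be invisible."""

    def __init__(self):
        super().__init__()
        self._stack = []        # (tag, is_hidden) for each open element
        self.hidden_text = []   # text fragments a human reader would not see

    def handle_starttag(self, tag, attrs):
        if tag in VOID_TAGS:
            return
        style = dict(attrs).get("style") or ""
        parent_hidden = bool(self._stack) and self._stack[-1][1]
        hidden = parent_hidden or bool(HIDDEN_STYLE.search(style))
        self._stack.append((tag, hidden))

    def handle_endtag(self, tag):
        # Pop back to the matching open tag; tolerates unbalanced email HTML.
        for i in range(len(self._stack) - 1, -1, -1):
            if self._stack[i][0] == tag:
                del self._stack[i:]
                break

    def handle_data(self, data):
        if self._stack and self._stack[-1][1] and data.strip():
            self.hidden_text.append(data.strip())


def find_hidden_text(html):
    """Return the text fragments hidden by inline styles in an HTML string."""
    finder = HiddenTextFinder()
    finder.feed(html)
    finder.close()
    return finder.hidden_text
```

Calling `find_hidden_text` on a message whose visible paragraph is followed by a `<span style="font-size:0px;color:#ffffff">` block returns only the text inside that span. A check like this would miss text hidden through external stylesheets, CSS classes, or near-white colors, so it is best read as an illustration of the technique rather than a filter to rely on.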

How the Scam Targets Gmail and Gemini

Attackers send seemingly innocuous emails containing hidden prompts that Gemini interprets when summarizing content. This can lead to the AI displaying phishing messages disguised as legitimate alerts, prompting users to disclose credentials. A July 2025 vulnerability report showed how such injections could hijack email summaries or even control smart devices via calendar invites. Unlike typical scams requiring clicks, this exploits AI’s integration in Gmail, making it stealthy and effective.
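
Because the fraudulent alert appears inside the AI-generated summary, one defensive idea is to screen the summary text itself before trusting it. The following is a rough sketch under stated assumptions: the summary is available as a plain string, and the hypothetical `SUSPICIOUS_PATTERNS` table and `flag_summary` function use a handful of illustrative keywords. It does not reflect how Gemini or Gmail screen their output.

```python
import re

# Hypothetical heuristics for phishing-style content in a summary.
# A real system would need far broader coverage and fewer false positives.
SUSPICIOUS_PATTERNS = {
    "credential_request": re.compile(
        r"\b(password|passcode|login|credentials?|verification code)\b", re.I),
    "urgency": re.compile(
        r"\b(urgent|immediately|compromised|suspended|act now)\b", re.I),
    "phone_number": re.compile(r"\+?\d[\d\s().-]{7,}\d"),
    "link": re.compile(r"https?://\S+", re.I),
}


def flag_summary(summary: str) -> list[str]:
    """Return the names of the heuristics that match the summary text."""
    return [name for name, pattern in SUSPICIOUS_PATTERNS.items()
            if pattern.search(summary)]
```

A summary such as "WARNING: Your Gmail password was compromised. Call 1-800-555-0100 immediately." trips the `credential_request`, `urgency`, and `phone_number` checks, while an ordinary summary about a budget review trips none. Keyword matching of this kind is easy to evade, so it complements the advice in this article and does not replace it.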

Expert Warnings and Google’s Response

Tech specialists such as Scott Polderman have labeled it an “AI against AI” attack, noting that hackers turn Google’s own tools against its users. Marco Figueroa explained how attackers make the injected instructions invisible to the reader, for example with zero font size or white text. Google has implemented mitigations, including model hardening and machine-learning-based detection, but still advises vigilance. The company states that it never requests login information via Gemini and suggests that concerned users disable certain features.

User Reactions and Experiences

Social media reflects alarm, with users opting to disable Gemini or revert to manual methods. One TikTok commenter suggested changing passwords preemptively, while others expressed frustration with modern tech vulnerabilities.

Protective Measures Against the Scam

| Action | Description |
|---|---|
| Disable Smart Features | In Google Workspace, turn off “Smart features” and the Gemini integration. |
| Change Passwords | Update credentials regularly, especially if using AI assistants. |
| Verify Alerts | Google never asks for login information via Gemini; ignore suspicious prompts. |
| Use Two-Factor Authentication | Enable 2FA for an added layer of security. |

Potential Impacts

| Stakeholder | Potential Impact |
|---|---|
| Gmail Users | Risk of data theft; need for vigilance and settings adjustments |
| Google | Reputation challenges; ongoing security enhancements |
| Cybercriminals | Exploitation opportunities; evolving tactics |
| AI Industry | Push for robust defenses against prompt attacks |

Conclusion

The rise of AI scams like indirect prompt injections underscores the double-edged nature of generative technologies. While Google continues to fortify Gemini, user awareness and proactive measures are crucial. By understanding these threats and implementing safeguards, Gmail users can mitigate risks in an increasingly AI-driven digital landscape.

Source Previews

Google Security Blog: Mitigating Prompt Injection Attacks

Discusses layered defenses against indirect injections.

Dark Reading: Google Gemini AI Bug

Details invisible malicious prompts for phishing.

SecurityWeek: Google Gemini Tricked Into Showing Phishing

Explains email summary hijacking.

BleepingComputer: Google Gemini Flaw Hijacks Email Summaries

Covers phishing via AI flaws.

HackRead: Promptware Attack on Gemini

Reports calendar invite exploits.